In this, the 500th post on the Lawrence Economics Blog, we bring you a story from the NYT on the statistical value of life. Indeed, as anyone in an environmental economics or policy course knows, the “value” placed on saving a statistical life (VSL) is associated with reductions in risk levels that decrease the probability of being killed (i.e., from reducing the number of purple balls in your urn).

This VSL is pivotal in determining the benefits of many non-economic regulations, and many federal agencies have increased the value used in benefit assessment in the past few years.

The Environmental Protection Agency set the value of a life at $9.1 million last year in proposing tighter restrictions on air pollution. The agency used numbers as low as $6.8 million during the George W. Bush administration.

The Food and Drug Administration declared that life was worth $7.9 million last year, up from $5 million in 2008, in proposing warning labels on cigarette packages featuring images of cancer victims.

The Transportation Department has used values of around $6 million to justify recent decisions to impose regulations that the Bush administration had rejected as too expensive, like requiring stronger roofs on cars.

That is the salient point of the article; the rest mostly gets down to talking about the prospects and problems of using VSLs in the first place. If you are reading this, you probably know already.
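For anyone who has not seen the arithmetic behind a VSL, here is a minimal sketch in Python. The numbers are made up for illustration (100,000 people each willing to pay $91 for a risk reduction that is expected to prevent one death among them); they are chosen only so the result matches the EPA's $9.1 million figure quoted above, and the function name is hypothetical.

```python
def value_of_statistical_life(wtp_per_person: float,
                              people: int,
                              expected_deaths_avoided: float) -> float:
    """Total willingness to pay for a risk reduction, per expected death avoided."""
    if expected_deaths_avoided <= 0:
        raise ValueError("expected_deaths_avoided must be positive")
    return wtp_per_person * people / expected_deaths_avoided


# Hypothetical inputs: 100,000 people, $91 each, one expected death avoided.
vsl = value_of_statistical_life(wtp_per_person=91.0,
                                people=100_000,
                                expected_deaths_avoided=1.0)
print(f"VSL: ${vsl:,.0f}")  # VSL: $9,100,000
```

Nobody is being asked what they would pay to avoid certain death; the figure is just many small payments for small risk reductions, added up until they cover one statistical life.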

It is a good couple of weeks for those interested in the economics of (and innovation in) illicit drug markets. First, HBO started up its mega super miniseries,

Almost unnoticed, this has been a terrible week for advocates of market solutions to environmental problems, including various cap-and-trade systems. The Wall Street Journal

“The safer they make the cars, the more risks the driver is willing to take. It’s called the Peltzman effect.” — Some CSI Episode

That’s the

The

… is certainly worth a barrel of cure. Instead of having these guys with big yellow boots (I thought only 4-year-old boys ran around in public in galoshes out of season), perhaps it would pay to have more egghead types crunching data on safety risk. That was the message I gave in both my classes this week, as we sat down to read Shultz and Fischbeck’s “

Just don’t try to sell the kits at Walgreens.

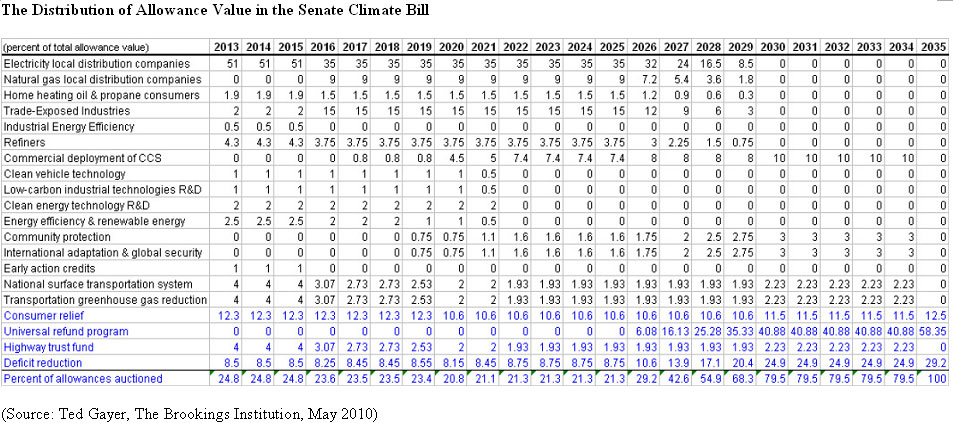

On the other side of the pond, there actually is a cap & trade system in place, and the price is really all over the place. Carbon prices have ranged from €8 to €30, and the volatility can stymie long-term investments. In other words, there is likely to be a direct relationship between expected carbon prices and the payoff to greener (or at least lower-carbon) energy sources. If investors don’t believe that carbon prices will be high, then green investments simply won’t be as attractive.

That’s the answer. The question is from

So that gets us back to the original question, which is, should we think about the regulatory framework for the current oil spill fiasco in terms of regulating some sort of risk or internalizing an externality? And, does it make a difference which approach we take in terms of the types of regulations we would want?